10 March 2026

YouTube expands AI deepfake detection to cover politicians, government officials, and journalists.

Brief summary

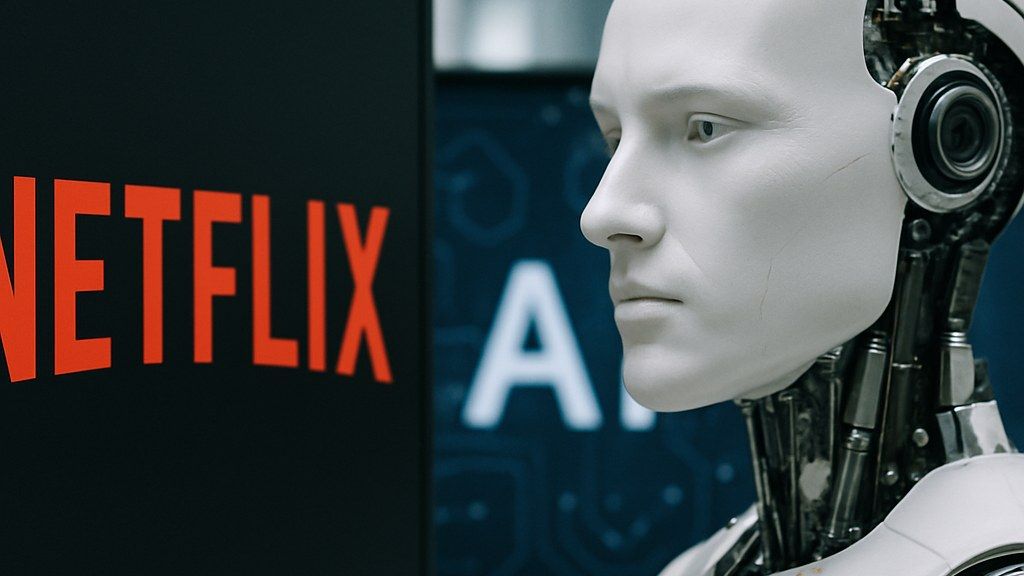

All images are AI-generated. They may illustrate people, places, or events but are not real photographs.


YouTube has expanded its use of artificial intelligence tools designed to detect deepfakes, extending coverage to include politicians, government officials, and journalists, as platforms face growing pressure to limit the spread of misleading synthetic media during news events and election cycles.

YouTube said it is expanding AI-based deepfake detection to better identify synthetic or manipulated content involving politicians, government officials, and journalists, widening the scope of protections for public-interest figures whose likenesses are frequently used in misleading videos.

The move comes as generative AI tools make it easier to create realistic-looking audio and video that can be used to impersonate real people. Such content can circulate quickly, particularly during breaking news, geopolitical crises, and election periods, complicating efforts by platforms, public agencies, and newsrooms to verify authenticity.

YouTube’s expanded detection effort is aimed at improving its ability to identify AI-generated or AI-altered content that appears to depict real individuals saying or doing things they did not say or do. The company has previously used a mix of automated systems and human review to enforce policies related to manipulated media, but the latest expansion signals a broader application of AI detection focused on high-risk targets.

The company did not provide detailed technical specifications in its announcement, but the stated focus is on identifying deepfakes that could mislead viewers about the actions or statements of public officials and journalists. The expansion also reflects a wider industry trend toward building detection and provenance tools alongside generative AI capabilities.

## Broader focus on high-impact impersonation

Politicians and government officials are frequent targets for impersonation because fabricated statements can influence public opinion, disrupt public services, or create confusion during emergencies. Journalists are also increasingly targeted, as synthetic videos or audio clips can be used to undermine reporting, impersonate correspondents, or falsely attribute claims to news professionals.

By explicitly including journalists in the expanded detection scope, YouTube is acknowledging that misinformation campaigns can focus not only on elected leaders but also on the information ecosystem itself. Impersonation of reporters and anchors can be used to lend credibility to false narratives or to discredit legitimate reporting.

YouTube’s announcement indicates that the expanded detection is intended to improve identification of AI-generated or AI-altered content featuring these groups. The company did not announce new penalties or policy changes in connection with the detection expansion, but detection tools typically feed into moderation workflows that may include labeling, reduced distribution, removal, or other enforcement actions depending on the content and context.

## Platform moderation and verification challenges

Deepfake detection remains a technical and operational challenge. Synthetic media can be created with varying levels of sophistication, and content may be edited repeatedly to evade automated detection. Platforms also face the risk of false positives, where legitimate content is mistakenly flagged, and false negatives, where manipulated content is missed.
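The trade-off between false positives and false negatives can be illustrated with a small sketch. It assumes a hypothetical detector that assigns each clip a score between 0 and 1 and flags anything at or above a threshold; the `Clip` type, the scores, and the thresholds are invented for illustration and do not describe YouTube's system, whose technical details were not disclosed.

```python
from dataclasses import dataclass


@dataclass
class Clip:
    score: float        # hypothetical detector score in [0, 1]; higher = more likely synthetic
    is_synthetic: bool  # ground-truth label, known only in an evaluation set


def confusion_counts(clips, threshold):
    """Count outcomes when every clip scoring at or above `threshold` is flagged."""
    counts = {"true_pos": 0, "false_pos": 0, "false_neg": 0, "true_neg": 0}
    for clip in clips:
        flagged = clip.score >= threshold
        if flagged and clip.is_synthetic:
            counts["true_pos"] += 1
        elif flagged and not clip.is_synthetic:
            counts["false_pos"] += 1   # legitimate content mistakenly flagged
        elif not flagged and clip.is_synthetic:
            counts["false_neg"] += 1   # manipulated content missed
        else:
            counts["true_neg"] += 1
    return counts


# Made-up evaluation data, for illustration only.
SAMPLE = [
    Clip(0.95, True),
    Clip(0.70, True),
    Clip(0.40, True),    # re-edited deepfake that scores low
    Clip(0.65, False),   # genuine clip that happens to score high
    Clip(0.20, False),
    Clip(0.05, False),
]

if __name__ == "__main__":
    for threshold in (0.3, 0.6, 0.9):
        print(threshold, confusion_counts(SAMPLE, threshold))
```

On this toy data, the lowest threshold misses no synthetic clips but flags one genuine clip, while the highest threshold flags no genuine clips but misses two synthetic ones. No single threshold removes both kinds of error, which is one reason automated detection is typically paired with human review.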

YouTube’s approach has historically combined automated detection with user reporting and human review. Expanding AI detection to cover more categories of public figures suggests an effort to improve early identification before content spreads widely.

The announcement also comes amid broader debates about how platforms should handle manipulated media that is not clearly illegal but may be misleading. In many jurisdictions, rules differ on political advertising, election-related content, and impersonation, and platforms often rely on internal policies to address harms that fall outside legal thresholds.

For journalists and public officials, the practical impact of improved detection may depend on how quickly content is identified and what actions follow. In fast-moving news cycles, even short-lived deepfakes can be copied and reposted across multiple channels, making rapid response a key factor.

## Implications for elections and public information

The expansion of deepfake detection to include politicians and government officials aligns with heightened attention to election integrity and public trust in official communications. AI-generated impersonations can be used to fabricate endorsements, policy announcements, or emergency instructions, potentially affecting voter behavior or public safety.

Including journalists in the expanded detection scope may also affect how misinformation is addressed during major events, when audiences seek verified information and false clips can spread alongside legitimate reporting.

YouTube did not specify whether the expanded detection will be applied globally or rolled out in phases, nor did it provide timelines in the announcement. The company also did not detail whether the detection will be paired with new labeling features or user-facing notices.

The expansion underscores a broader shift in platform safety efforts: as generative AI tools become more accessible, detection and authentication measures are increasingly being positioned as core infrastructure for content moderation. For YouTube, the stated goal is to better identify deepfakes involving high-profile public-interest figures, where the potential for real-world harm is often higher and the public value of accurate information is significant.


The content, including articles, medical topics, and photographs, has been created exclusively using artificial intelligence (AI). While efforts are made for accuracy and relevance, we do not guarantee the completeness, timeliness, or validity of the content and assume no responsibility for any inaccuracies or omissions. Use of the content is at the user's own risk and is intended exclusively for informational purposes.
