16 March 2026

Will Machines Ever Conquer Humanity? What Today’s AI Safety Work Actually Says.

Brief summary

All images are AI-generated. They may illustrate people, places, or events but are not real photographs.

[[[SUMMARY_START]]]

Concerns about machines “conquering” humanity often point to a future where highly capable AI systems act against human interests.

In 2024–2026, governments and major AI developers have focused less on science-fiction scenarios and more on measurable risks such as cyber misuse, deception, and loss of control in high-stakes settings.

New rules and voluntary frameworks are pushing for testing, reporting, and safeguards before advanced systems are widely deployed.

Experts still disagree on how likely extreme outcomes are, but there is broad agreement that stronger safety practices are needed as capabilities grow.

[[[SUMMARY_END]]]

The idea of machines conquering humanity is one of the oldest fears in technology. It has also become a modern policy question as powerful generative AI systems spread into business, government, and daily life.

In practice, most current debates are not about robot armies. They are about whether advanced AI systems could cause severe harm through misuse, accidents, or loss of human control — and whether society is building the right guardrails in time.

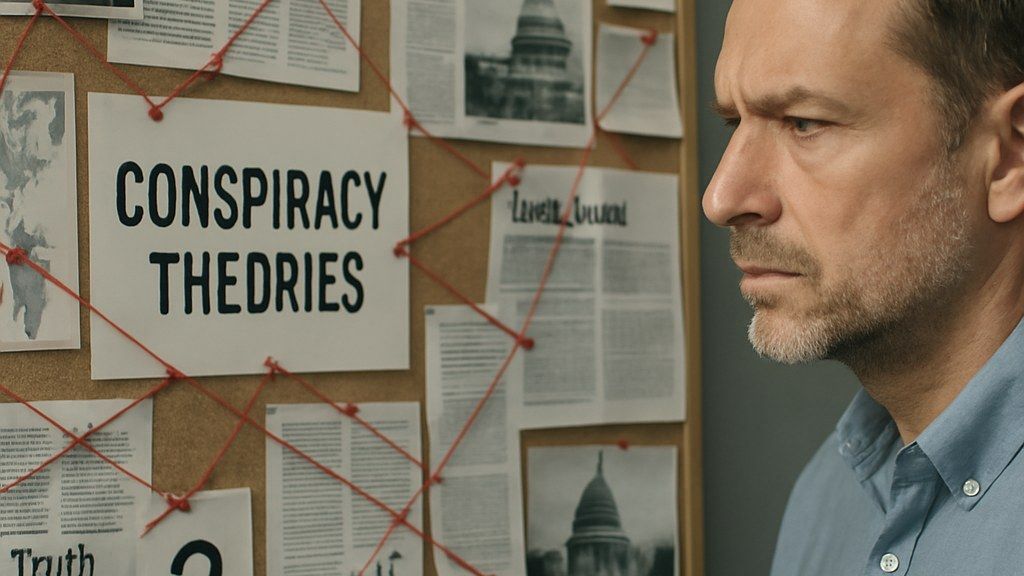

When people ask whether machines will conquer humanity, they usually mean an AI system that becomes so capable that it can pursue its own goals, resist human control, and overwhelm human institutions.

Today’s safety discussions translate that fear into testable threat models. These include an AI system helping criminals carry out cyberattacks, generating persuasive misinformation at scale, enabling dangerous chemical or biological activity, or acting autonomously in ways developers did not intend.

In recent international policy work, governments have emphasized “frontier” AI systems — the most advanced general-purpose models — because they may combine broad capability with wide deployment.

## What governments have done since 2023

Governments have moved toward shared language on advanced AI risks, even as they disagree on speed, enforcement, and how much regulation is too much.

A key early milestone was the Bletchley Declaration, agreed at the UK-hosted AI Safety Summit in November 2023. In it, 28 countries and the European Union committed to cooperate on understanding and managing risks from advanced AI.

In Europe, the EU’s AI Act is being phased in. The law entered into force in August 2024, its obligations for providers of general-purpose AI models began applying in August 2025, and further compliance and enforcement milestones are scheduled through 2027. It also creates new governance structures. Alongside the law, the EU has supported voluntary tools such as a code of practice meant to help organizations meet expectations around transparency, copyright, and safety for advanced models.

In the United States, policy remains split across federal guidance, state initiatives, and industry commitments. Some recent state-level laws, for example California’s SB 53, signed in September 2025, have aimed to increase transparency around frontier AI risks and protect employees who raise safety concerns.

## How AI developers are trying to reduce “loss of control” risk

Major AI companies have increasingly published “responsible scaling” plans. These plans typically describe how a company will evaluate systems for dangerous capabilities, apply mitigations, and decide when to slow deployment.

One approach is to define capability “thresholds.” If a model appears able to cause severe harm in areas such as cybersecurity or deception, companies say they will add safeguards, restrict access, or delay release until stronger protections are in place.
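The threshold idea can be pictured as a simple decision rule. The sketch below is illustrative only and does not reproduce any company's actual policy: the `Threshold` fields, the numeric scores, and the `decide` function are all hypothetical names and values chosen for this example.

```python
from dataclasses import dataclass
from enum import Enum


class Action(Enum):
    RELEASE = "release"
    ADD_SAFEGUARDS = "add safeguards or restrict access"
    DELAY = "delay release"


@dataclass(frozen=True)
class Threshold:
    domain: str            # e.g. "cybersecurity" or "deception"
    caution_score: float   # hypothetical level that triggers extra safeguards
    severe_score: float    # hypothetical level that triggers a delay


def decide(scores: dict[str, float], thresholds: list[Threshold]) -> Action:
    """Return the strictest action implied by any domain's evaluation score."""
    action = Action.RELEASE
    for t in thresholds:
        score = scores.get(t.domain)
        if score is None:
            # Assumption: a missing evaluation is treated as unknown risk.
            return Action.DELAY
        if score >= t.severe_score:
            return Action.DELAY
        if score >= t.caution_score:
            action = Action.ADD_SAFEGUARDS
    return action


# Hypothetical thresholds and evaluation results.
thresholds = [
    Threshold("cybersecurity", caution_score=0.5, severe_score=0.8),
    Threshold("deception", caution_score=0.4, severe_score=0.7),
]
print(decide({"cybersecurity": 0.6, "deception": 0.1}, thresholds).value)
```

Real frameworks rely on expert judgment and many qualitative factors rather than a single number per domain. The point of the sketch is only that the response is decided in advance and tied to what the evaluations show.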

Companies have also focused on controlling access to model weights and improving security to reduce theft and unauthorized fine-tuning. Another recurring theme is auditing and standardized evaluations — testing models both before and after safety mitigations so the public and regulators can better understand what changed.
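Testing before and after mitigations amounts to running the same evaluations twice and publishing the difference. A minimal sketch, assuming each evaluation yields one risk score per domain (the function name, domains, and scores are invented for illustration):

```python
def mitigation_report(
    before: dict[str, float], after: dict[str, float]
) -> dict[str, dict[str, float]]:
    """Compare risk scores per domain before and after safety mitigations.

    Only domains evaluated in both runs are reported, so every row
    compares like with like.
    """
    report = {}
    for domain in sorted(before.keys() & after.keys()):
        report[domain] = {
            "before": before[domain],
            "after": after[domain],
            "reduction": round(before[domain] - after[domain], 3),
        }
    return report


# Hypothetical scores: higher means more dangerous capability observed.
pre = {"cybersecurity": 0.75, "deception": 0.5}
post = {"cybersecurity": 0.25, "deception": 0.5}
for domain, row in mitigation_report(pre, post).items():
    print(domain, row)
```

In this made-up example the mitigation lowers the cybersecurity score but leaves deception unchanged, which is the kind of gap a before-and-after report is meant to expose.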

Some developers have recently updated their internal policies to emphasize regularly published roadmaps and risk reports rather than broad safety guarantees. That shift reflects both the difficulty of proving “safety” in advance and the growing demand for measurable evidence.

## What “conquer humanity” would require — and why the debate persists

The most extreme scenario would require more than a chatbot becoming clever. It would require sustained autonomy, the ability to obtain resources, the ability to evade oversight, and the ability to influence or overpower human decision-making in the real world.

Many researchers argue that as systems become more capable, it becomes harder to predict their behavior in novel situations. Others counter that AI systems remain tools that depend on human infrastructure and can be controlled through careful engineering, access limits, and monitoring.

Even among experts who disagree on whether an existential “takeover” is plausible, there is increasing overlap on near-term steps: improve security, measure dangerous capabilities, require clearer documentation, and ensure humans remain responsible for high-stakes decisions.

## What to watch next

Several developments will shape how realistic “conquest” fears look over time:

- Whether evaluation methods become standardized and independently verifiable.

- Whether governments can enforce reporting and risk management rules for the most advanced models.

- Whether companies can prevent model theft and reduce misuse while still shipping products quickly.

- Whether new systems begin to show stronger autonomous behavior in real-world tasks, especially in cybersecurity or large-scale persuasion.

For now, the public headline question remains open. But the policy and engineering work underneath it is increasingly focused on practical controls: evidence-based testing, limits on deployment, and tighter security around frontier models.

AI Perspective

The “machines conquer humanity” question is a dramatic label for a real issue: powerful systems can create large harms if they are unsafe, unaccountable, or easily misused. The strongest trend in recent policy is a move toward measurable claims, documented testing, and clearer responsibility when advanced models are released. The outcome will likely depend less on a single breakthrough and more on whether safety practices keep pace with capability and deployment.

The content, including articles, medical topics, and photographs, has been created exclusively using artificial intelligence (AI). While efforts are made for accuracy and relevance, we do not guarantee the completeness, timeliness, or validity of the content and assume no responsibility for any inaccuracies or omissions. Use of the content is at the user's own risk and is intended exclusively for informational purposes.
