18 March 2026

Military Use of Artificial Intelligence Widens as Governments Face Growing Ethical Pressure

Brief summary

All images are AI-generated. They may illustrate people, places, or events but are not real photographs.

[[[SUMMARY_START]]]

Militaries are rapidly expanding the use of AI for intelligence analysis, decision support, and autonomous systems, accelerating adoption through new policies and large-scale programs.

At the same time, humanitarian and legal groups are warning that faster machine-driven targeting and autonomy could weaken accountability and raise risks to civilians.

Diplomats are debating global rules, but there is still no binding international treaty covering lethal autonomous weapons.

Recent UN votes and NATO governance efforts show rising demand for guardrails, even as major powers push to move faster.

[[[SUMMARY_END]]]

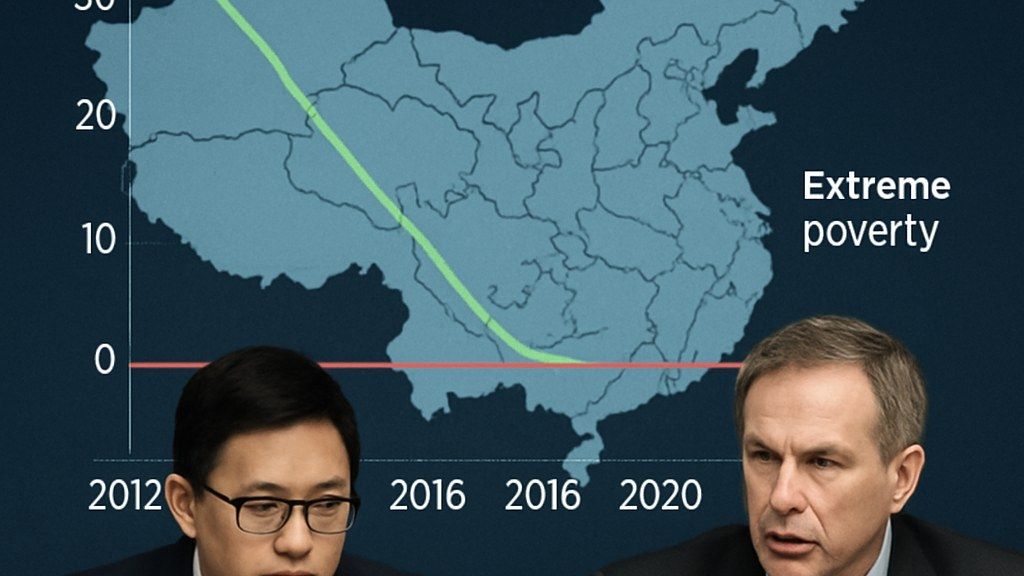

Military use of artificial intelligence is spreading across more missions and more countries, from battlefield intelligence fusion to autonomous drones and air defense. The shift is being driven by the speed of modern warfare, the availability of commercial AI, and competition among major powers.

But the expansion is also sharpening ethical and legal questions. Governments, the UN, and humanitarian organizations are debating how to preserve human control, ensure accountability, and protect civilians when software increasingly shapes life-and-death decisions.

In early 2026, the U.S. Defense Department rolled out a new “AI acceleration” approach aimed at moving AI tools into operational use more quickly. The plan focuses on a small number of high-priority projects and efforts to remove internal barriers that slow deployment.

The policy shift reflects a broader pattern across advanced militaries. AI is no longer limited to research labs and pilot programs. It is being embedded into data platforms, intelligence workflows, and command-and-control concepts designed to connect sensors, analysts, and operators across services.

Outside the United States, NATO has also emphasized speed and scale, while framing AI adoption as a governance challenge. NATO’s revised AI strategy, released in 2024, set out a push to accelerate adoption while endorsing principles meant to guide responsible use in defense.

## Where AI is being used: surveillance, targeting support, and autonomous systems

Military AI applications cover a wide range of tasks. Many focus on processing large volumes of data, such as satellite imagery, drone video, and signals intelligence, to help humans find relevant information more quickly.

In practice, this can mean AI-assisted image recognition to flag objects of interest, tools that help prioritize and summarize intelligence reports, and software that supports planning by generating options for commanders.

Another area is autonomy in platforms such as drones, loitering munitions, and unmanned surface or underwater vehicles. Autonomy can range from navigation and obstacle avoidance to more sensitive functions such as identifying potential targets.

Air and missile defense is another area of use. These systems already rely heavily on automation because engagement decisions can be time-critical. As AI is integrated into sensing and tracking, governments must work out how to ensure reliability and prevent escalation driven by machine-speed engagements.

## Ethical concerns center on control, accountability, and civilian harm

Critics say the core ethical issue is not simply whether AI is present, but whether humans retain “meaningful” control over the use of force.

The International Committee of the Red Cross (ICRC) has repeatedly called for states to regulate autonomous weapon systems under international law. In May 2025, the ICRC urged governments to negotiate a legally binding instrument with clear prohibitions and restrictions, arguing that human control and judgment are essential for compliance with humanitarian law.

Human rights advocates have also warned about practical risks. These include errors in identifying targets, bias in data-driven systems, and the difficulty of assigning responsibility when an algorithm contributes to a harmful outcome.

A separate concern is the pace of decision-making. As AI compresses timelines, humans may be asked to approve actions with less time to verify the underlying information, potentially weakening oversight even when a person is formally “in the loop.”

## International governance is moving, but binding rules remain elusive

At the UN, debate has intensified around how AI in the military domain could affect international peace and security.

In December 2024, the UN General Assembly adopted Resolution 79/239 on artificial intelligence in the military domain and its implications for international peace and security. The resolution triggered a process for states to submit views and for the UN system to assess risks and potential guardrails.

In February 2026, the UN General Assembly also backed the creation of a global scientific panel on the impacts and risks of AI. The measure passed by a wide margin, but the vote also exposed disagreement over how far the UN should go in shaping AI governance.

At the same time, long-running talks under the Convention on Certain Conventional Weapons (CCW) on lethal autonomous weapons systems have continued without producing a new treaty. Supporters of a binding agreement argue that voluntary principles are not enough, while others prefer national policies and non-binding norms.

## What to watch next

In 2026, the central question for many governments is how to balance speed and military advantage with safeguards that reduce risks to civilians and prevent unintended escalation.

In practical terms, that debate is likely to focus on where autonomy is permitted, what kinds of targets can be delegated to machines, what testing and auditing standards apply, and how responsibility is assigned when AI contributes to a military decision.

With AI capabilities advancing quickly and spreading through commercial markets, officials and humanitarian groups alike say the gap between technical progress and agreed rules is becoming harder to ignore.

AI Perspective

Military AI is moving from isolated tools to connected systems that can shape decisions at speed. That makes governance less about abstract principles and more about concrete limits, testing, and accountability that can work during real operations. The biggest pressure point is whether human control stays meaningful as machines do more of the sensing, sorting, and recommending.

The content, including articles, medical topics, and photographs, has been created exclusively using artificial intelligence (AI). While efforts are made for accuracy and relevance, we do not guarantee the completeness, timeliness, or validity of the content and assume no responsibility for any inaccuracies or omissions. Use of the content is at the user's own risk and is intended exclusively for informational purposes.
